September 2019

Summary

The Programme for International Student Assessment (PISA) aims to ‘help governments shape their education policy’. Every three years, a sample of 15-year-olds from around the world is assessed in three domains: reading, maths and science. In the 2015 study, students were also assessed on their collaborative problem solving (CPS) skills. The CPS assessment involved individual students collaborating with computer-based partners in a simulation of a real-world activity (Shaw and Child, 2017).

Data from PISA has been used to make decisions about the way GCSEs are administered and awarded. However, PISA and GCSE are very different assessments and serve different purposes. Judgements about one assessment based on the other depend on the assumption that the two are closely related.

In this Data Byte we look at the correlation between individual students’ performance on PISA and GCSE to show the extent to which PISA measures the same skills as GCSE subjects.

What does the chart show?

The Department for Education provided data from the PISA 2015 study for participants in England matched to their performance across a large number of GCSE subjects, the vast majority of which would have been assessed in summer 2016. This data set was anonymised so that no individual student could be personally identified. The full data set contained information on 5,194 students from a total of 206 schools.

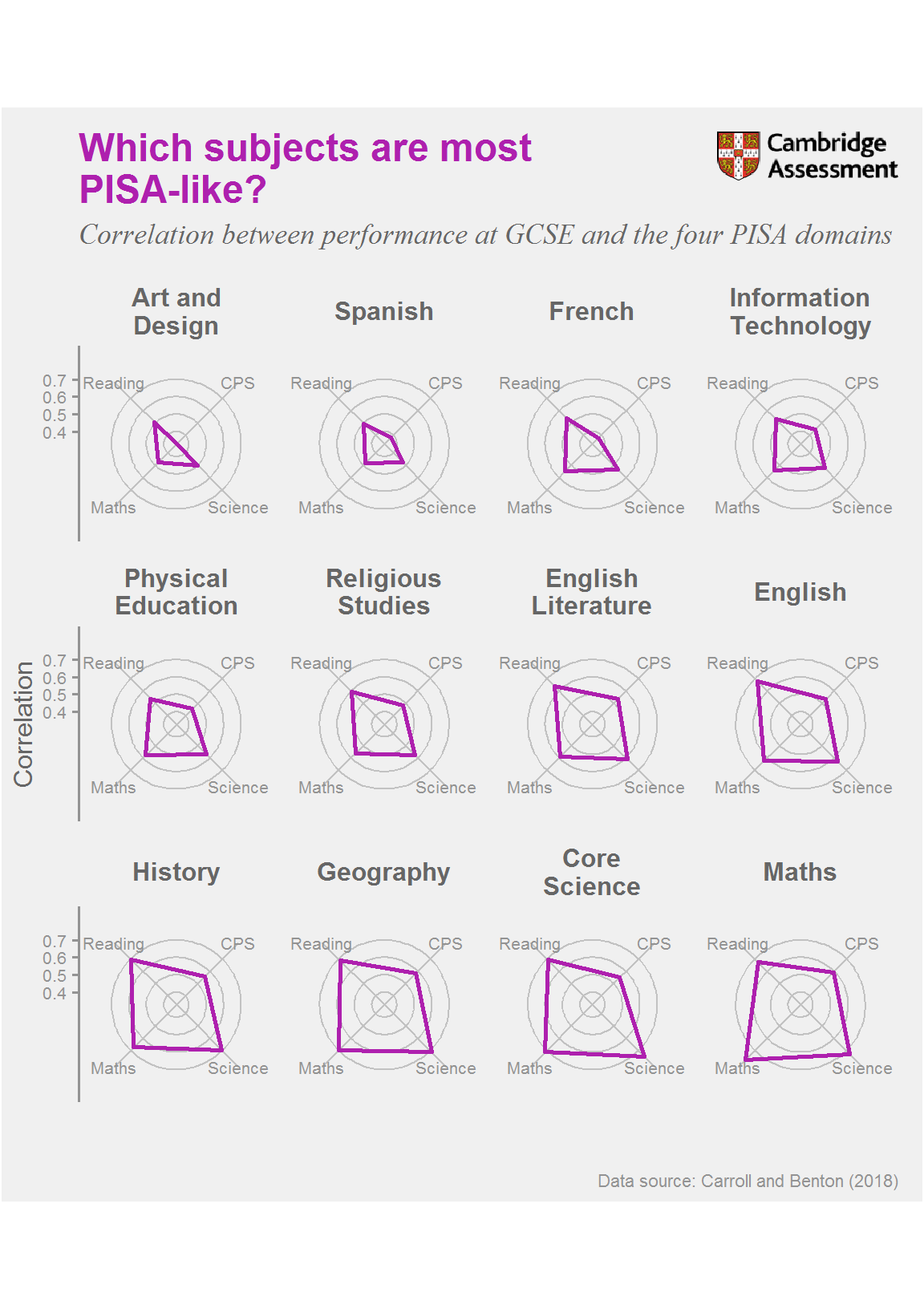

The chart shows the correlation between students’ performance at GCSE and in each of the four PISA domains. The subjects have been ordered by their average correlation with the PISA domains. We show only subjects with more than 750 matched students and, of the science subjects, include only Core Science, so that the chart is not dominated by science subjects. The scale is restricted to the observed values to allow easy comparison between GCSE subjects. Details of our analysis and the table of correlations that the chart is based on can be found in our report.
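The steps behind a chart of this kind can be sketched in a few lines of Python. This is a minimal illustration, not the method used in our report: the record layout (`subject`, `grade` and one score per PISA domain), the function names and the descending sort order are all assumptions made for the example, and it ignores details such as PISA's plausible values and survey weights, which a full analysis would need to address.

```python
from math import sqrt

# Hypothetical field names for the four PISA 2015 domains.
PISA_DOMAINS = ("reading", "maths", "science", "cps")


def pearson(xs, ys):
    """Pearson correlation coefficient r between two equal-length sequences.

    Raises ZeroDivisionError if either sequence has no variation.
    """
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    return cov / sqrt(var_x * var_y)


def rank_subjects(records, min_students=750):
    """Correlate GCSE grades with each PISA domain, subject by subject.

    `records` is an iterable of dicts, one per matched student and subject,
    with the assumed keys 'subject', 'grade' and one key per PISA domain.
    Returns a list of (subject, {domain: r}, mean_r) tuples for subjects
    with more than `min_students` matched students, ordered by mean_r
    from highest to lowest (the ordering direction is an assumption).
    """
    by_subject = {}
    for rec in records:
        by_subject.setdefault(rec["subject"], []).append(rec)

    rows = []
    for subject, recs in by_subject.items():
        if len(recs) <= min_students:
            continue  # keep only subjects with more than min_students
        grades = [r["grade"] for r in recs]
        corrs = {d: pearson(grades, [r[d] for r in recs]) for d in PISA_DOMAINS}
        mean_r = sum(corrs.values()) / len(corrs)
        rows.append((subject, corrs, mean_r))

    rows.sort(key=lambda row: row[2], reverse=True)
    return rows
```

Calling `rank_subjects(records)` on a matched data set laid out as assumed above would give one row per qualifying subject, with the four correlations and their average, which is the information needed to draw and order such a chart.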

Why is the chart interesting?

In absolute terms, the correlations between performance in the PISA domains and at GCSE are only moderately strong, indicating that the assessments measure different skills. The subjects most strongly correlated with PISA across all four domains are Maths, Core Science, Geography and History. The subjects most dissimilar to PISA are Art and Design, Spanish, French and Information Technology.

As may be expected, the largest correlation between a PISA domain and a GCSE subject is between PISA maths and Maths GCSE (r = 0.777), and the next strongest is between PISA science and Core Science (r = 0.748). Hence, for maths and science, the strongest correlation is with the most relevant GCSE subject.

Subjects that might have been expected to correlate strongly with PISA reading scores actually showed relatively weak correlations: English (r = 0.680) and English Literature (r = 0.637) were less strongly correlated with reading than History (r = 0.696), Core Science (r = 0.692) and Geography (r = 0.687). This indicates that PISA reading measures different skills to those assessed by GCSE English. In particular, this may relate to the fact that none of the PISA domains measures skills such as essay writing, which are both taught and assessed within GCSE English.

Correlations between collaborative problem solving and GCSE performance were substantially lower than those for the other domains. Maths (r = 0.593) showed the strongest correlation of any individual subject, but this was still relatively weak. Hence, none of the GCSE subjects in the chart appears to measure the skills assessed by PISA collaborative problem solving to any great extent. This is notable as collaborative problem solving was the PISA domain in which England’s performance was the strongest relative to the OECD average (Jerrim and Shure, 2017). In other words, England’s strongest performance came in a domain that does not appear to relate to what is actually taught and assessed as part of GCSEs.

Further information

Full details on the data and methods behind this chart can be found in The link between subject choices and achievement at GCSE and performance in PISA 2015 by our researchers, Matthew Carroll and Tom Benton. Tom Benton presents the key findings from the report in this video.

Jerrim, J. and Shure, N. (2017). Achievement of 15-Year-Olds in England: PISA 2015 Collaborative Problem Solving National Report. London: Department for Education DFE-RR765. Available from https://www.gov.uk/government/publications/pisa-2015-national-report-for-england.

Shaw, S. and Child, S. (2017). Utilising technology in the assessment of collaboration: A critique of PISA’s collaborative problem-solving tasks. Research Matters: A Cambridge Assessment publication, 24, 17-22. Available from http://www.cambridgeassessment.org.uk/Images/426176-research-matters-24-autumn-2017.pdf.

1. That is, the chart's scale covers only the observed correlations rather than running from 0 to 1.