Choice is usually promoted as being a good thing, with its connotations of freedom and autonomy. Consumer choice (e.g. of supermarket, or energy supplier) is relatively unproblematic. Viewer choice (of television channel) is slightly further along the scale – even if it increases overall quality (and not everyone would agree it does) perhaps it does so at the expense of making it more difficult to locate good programmes. Going further along the spectrum we are often told of the virtues of parental choice (of school) or patient choice (of hospital) – but this is much more debatable. It has been argued that people don’t want the possibility of choosing a poor school or hospital; they just want their local one to be good.

When it comes to exams, schools have a choice of exam board (exam boards are now known as Awarding Organisations, or AOs). However, employers and further/higher education institutions are not usually interested in which AO awarded a student’s result, treating grades from different AOs as equivalent and their syllabuses as interchangeable. To make this justifiable, the AOs are heavily regulated, with inevitable convergence in syllabus content and assessment models.

Having chosen their exam board for a particular qualification, schools in some subjects face a further choice about which topics to teach. This occurs when it would not be possible or desirable to teach all the topics on the syllabus in the time available, so the syllabus sets out a choice of topic options. Examples include A level History, where there is often a choice about which country or time period to study; English Literature, where there is a choice of texts; and Religious Studies, where there is a choice of religions. Depending on the arrangements for the specific assessment, this choice might be indicated by schools when they enter their students, in which case the students will only be presented in the examination hall with papers containing questions on the topics they have studied. Alternatively, it might be indicated by the students themselves at the time of the examination, which involves them recognising which topics they have studied or prepared for and (presumably!) choosing those.

Arguments in favour of giving teachers a choice about which topics to teach might include giving them the chance to exercise professional autonomy and capitalise on their own interests and specialisms; allowing them to adapt their teaching to the local context (e.g. students in schools in coastal locations might be more interested in coastal geography); or (more mundanely) the fact that their school might only have the resources (such as textbooks) for teaching certain topics. If different teachers and schools make different choices, this provides diversity and breadth of knowledge when the national cohort is considered as a whole. However, in practice there may be much less diversity than is in principle possible, as summarised in the ‘Hitler and the Henrys’ headline prompted by Cambridge Assessment research into topic choices in A level History.

Sometimes schools have a choice of assessment model (which components of an assessment to enter) – for example whether to enter students for a science practical assessment or to choose an alternative written exam paper assessing (some of) the same skills. This choice is often a feature of international exams such as Cambridge International’s IGCSE in science subjects. A final choice for schools in some qualifications such as the GCSE is the choice (in some subjects) of which tier to enter a student for. We discussed some of the issues relating to tiering in a previous blog post.

Once their schools have chosen which components of the assessment the students will take, the students themselves are then sometimes faced with a choice in the examination hall. Our recently published article describes three different categories of choice here:

1 – a restricted choice for the student (imposed by the teacher), where the student has only been taught some of the topics that might appear on the examination, either because the assessment was designed that way (i.e. it was not intended that every possible topic should be covered, as described above), or because the teacher has decided to maximise teaching of the minimum number of topics necessary for the exam.

2 – a restricted choice for the student (self-imposed), where the student has been taught every topic that could potentially be assessed but has only chosen to prepare for certain topics, in the hope or expectation that their chosen topics will appear. This limits their actual choice in the examination.

3 – a completely free choice for the student, where the student has been taught, and has prepared for, every topic that could potentially be assessed and chooses the questions that they think they will score best on.

What are the arguments for allowing the students themselves to choose questions in the examination hall? The most obvious one is that it could allow students to show themselves in the best possible light – but of course this assumes that students have sufficient awareness of their own strengths to pick the questions they will do best on. The research evidence on this is mixed, as discussed in our article.

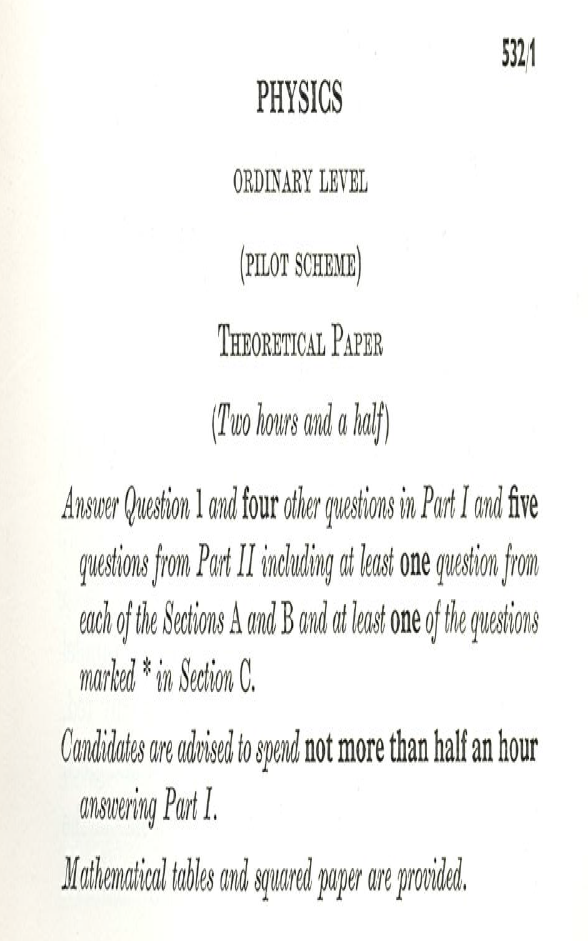

One disadvantage of allowing choice is that it can give rise to complex instructions explaining to students which questions they should answer. In the already stressful situation of an exam, arguably we do not want to increase the pressure on students by presenting them with complex instructions (although things have got a bit better since the example from the 1960s shown in the picture!).

(Pictured above) Extract from an O level Physics paper set by UCLES in July 1967. Source: Cambridge Assessment Group Archives

A more serious objection to student choice is that it effectively creates a (potentially very large) number of different exams within an exam. Are these different ‘virtual exams’ all equally difficult? AOs are able to compensate for any difference in difficulty of different exam papers by setting different grade boundaries, but when the different exams are virtual ones created by the exercise of choice, there is usually no such compensation. It seems somewhat contradictory to acknowledge that sets of questions can differ in difficulty (by allowing grade boundaries to differ on exams containing only compulsory questions) but then to implicitly assert that when questions are optional they are equally difficult. However, trying to calculate statistical adjustments that somehow allow for any differences in difficulty of optional questions is very problematic, as discussed at length in our article, which could explain why this is not usual practice in assessments in England. Instead, efforts are made to try to make optional questions as similar in difficulty as possible, such as by using questions that are similar in form, asking students to do a similar task but in relation to the different topics/religions/authors/time periods etc. (e.g. ‘Describe one attitude that some [Buddhists/Christians/Hindus/Muslims/Jews/Sikhs] might have towards human rights’).
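To give a sense of how quickly the number of ‘virtual exams’ can grow, here is a minimal sketch in Python. It assumes a hypothetical paper in which students answer a fixed number of questions from a pool of optional ones, with no other restrictions; the function name and the example numbers are illustrative only and are not taken from any real paper. Real papers often add section rules, which would reduce the count.

```python
from math import comb


def count_virtual_exams(n_optional: int, n_to_answer: int) -> int:
    """Count the distinct sets of questions a student could end up answering.

    Assumes (hypothetically) that any combination of optional questions
    is allowed and that the order of answering does not matter.
    """
    return comb(n_optional, n_to_answer)


# Hypothetical paper: answer any 3 of 8 optional questions.
print(count_virtual_exams(8, 3))  # 56

# Hypothetical two-section paper: answer 2 of 5 questions in Section A
# and 1 of 4 questions in Section B. The counts for each section multiply.
print(count_virtual_exams(5, 2) * count_virtual_exams(4, 1))  # 40
```

Even in these small made-up cases there are dozens of possible question sets sharing a single set of grade boundaries, which is why the comparability of optional questions matters.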

In summary, allowing question choice in an exam can reduce the validity of inferences about what students know and can do if they are only taught, or only choose to revise, a subset of the topics that were intended by the designers of the syllabus to be covered during teaching and learning. It can create unnecessarily complex instructions about which questions to answer, it assumes that students can make good choices, and it potentially creates problems of comparability. Therefore it is better to avoid question choice within a paper unless there is good reason to include it, and if choice is necessary, care needs to be taken to make the task demands as similar as possible. In recent decades there has been a reduction in the opportunity for students to choose which questions to answer. We would recommend that AOs produce a rationale showing how the benefits of allowing choice outweigh the drawbacks in those cases where question choice is a possibility.

Tom Bramley and Victoria Crisp

Research Division, Cambridge Assessment
