In this blog, former teacher and Senior Professional Development Manager at The Assessment Network, James Beadle, shares his expertise on the theory behind constructing good multiple-choice questions.

What are multiple-choice questions and why are they useful?

Multiple-choice questions (MCQs) ask learners to choose the correct answer from a list of options.

They can be an incredibly useful tool in both summative and formative assessment contexts, as they provide a quick way of assessing learners’ understanding of concepts that might otherwise require a longer, free-text response.

If you want to assess, for example, whether students understand the meaning of a particular word, you can either:

- Ask them to write down the meaning, which will likely lead to a wide range of answers.

- Or ask them to select the correct meaning from a list of possible choices, which is far quicker to assess.

As such, it’s not surprising that they’re used in a wide range of assessments, from driving theory tests to entrance examinations for US universities.

Constructing good MCQs

The use of MCQs, however, does not come without risk. We often see high-profile cases where a poorly constructed multiple-choice question captures media attention and sparks debate about fairness. To illustrate how easily this can happen, consider the following hypothetical example from a reading comprehension task:

Example question:

He wriggled back inside the cave…

What does this tell you about how Joseph got inside the cave? Tick one.

- A) He sprinted quickly inside.

- B) He jumped through the opening.

- C) He had to squeeze in.

- D) He snuck in quietly.

The ‘correct’ answer is intended to be “He had to squeeze in”, but you may think that the answer “He snuck in quietly” is equally valid.

When creating multiple-choice questions, it is essential that the distractors (incorrect options) are clearly wrong (unless the question says something along the lines of “Tick the one that best describes how Joseph got back inside the cave”).

In the context of this question, most people would agree that sprinting or jumping are not synonymous with wriggling.

However, you might ask:

- Can you sneak into somewhere by wriggling?

- Why do two of the options contain adverbs, and two do not?

- Should students infer that the two answers with adverbs are both incorrect, given that they add a context not present in the question itself?

This question is likely ‘difficult’, but is this difficulty desirable? As assessment practitioners, we need to recognise that difficulty comes in many forms, some of which might support our goals for the assessment and associated learning, and others that might undermine them.

If, for example, the last option had instead been “He cycled into the cave”, it is probable that students would have found the item significantly easier, perhaps to the extent that the question no longer discriminated between students of different abilities.

In any exam, learners cannot know what the question writers had in mind when an item was written.

That is why it’s important that every item is built with the principles of assessment firmly in mind before it is used.

Multiple-choice questions in teaching

Of course, multiple-choice questions don’t just exist within the world of high-stakes summative assessment. During my time in the classroom as a mathematics teacher, I became increasingly aware of just how powerful they can be as formative tools, revealing not only what our students know but also, just as usefully, what they don’t know.

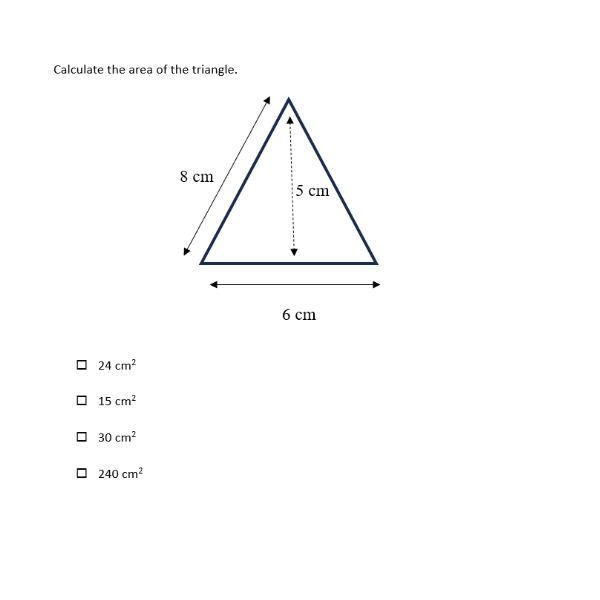

Consider the following question:

The correct answer is 15 cm². Importantly, each incorrect answer highlights a possible misconception. An answer of 24 cm² comes from incorrectly using 8 cm as the height of the triangle. Students giving an answer of 30 cm² have probably forgotten that a triangle’s area is half of its base multiplied by its height. The answer of 240 cm² comes from multiplying all the values together, which is instead how the volume of a cuboid is calculated.
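The diagram isn’t reproduced in this text, but the answers described imply a triangle with a 6 cm base, a 5 cm perpendicular height and an 8 cm sloping side. These values are an inference from the four options, not taken from the image itself. Under that assumption, each option can be traced back to the working that produces it:

```latex
\begin{aligned}
\text{Key (half of base} \times \text{height):} \quad & \tfrac{1}{2} \times 6 \times 5 = 15\ \text{cm}^2 \\
\text{8 cm used as the height:} \quad & \tfrac{1}{2} \times 6 \times 8 = 24\ \text{cm}^2 \\
\text{Halving forgotten:} \quad & 6 \times 5 = 30\ \text{cm}^2 \\
\text{All values multiplied (cuboid volume):} \quad & 6 \times 5 \times 8 = 240\ \text{cm}^2
\end{aligned}
```

Writing the options out this way is a quick check that every distractor comes from exactly one identifiable misconception, and that no two misconceptions happen to produce the same number.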

With each incorrect answer, the teacher knows the misconception behind it, allowing them to give immediate, powerful feedback to their students. In a summative exam, this question is perhaps best given as an open response item, so that each student’s mathematical workings can be seen and appropriately credited. But in a classroom, the multiple-choice format, if used wisely, allows the teacher to gain the same level of knowledge of students’ understanding as if they’d marked each response individually, yet it can be delivered in a fraction of the time.

To quote Dylan Wiliam and Siobhan Leahy, “the shorter the time interval between eliciting the evidence and using it to improve instruction, the bigger the likely impact on learning”. In other words, the quicker we can give feedback to students, the better.

Multiple-choice questions provide an excellent means to achieve this. They can be delivered effectively in the classroom in a wide range of ways, from using an online platform to send the question to each student’s electronic device to simply displaying it on a PowerPoint slide and having students answer on mini-whiteboards. The key thing is that, as a teacher, you can identify misconceptions as quickly as possible and then take immediate steps to correct them.
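As a rough illustration of how quickly responses can be turned into feedback, the Python sketch below counts a class’s answers to the triangle question and reports the misconception behind each wrong answer. The mapping restates the options described earlier; the list of responses is hypothetical, standing in for whatever an online platform or a quick scan of mini-whiteboards gives you.

```python
from collections import Counter

# Options for the triangle question (in cm²) mapped to the misconception each
# one is designed to reveal; None marks the key.
MISCONCEPTIONS = {
    15: None,
    24: "used 8 cm as the height",
    30: "forgot to halve base x height",
    240: "multiplied all the values (cuboid volume)",
}


def summarise(responses):
    """Return (option, count, diagnosis) tuples, most common answer first."""
    counts = Counter(responses)
    return [(option, n, MISCONCEPTIONS.get(option, "not one of the options"))
            for option, n in counts.most_common()]


# Hypothetical responses from one class, for illustration only.
class_responses = [15, 30, 15, 24, 30, 30, 15, 240, 30, 30]

for option, n, diagnosis in summarise(class_responses):
    # A diagnosis of None means the learner chose the key.
    print(f"{option} cm²: {n} learner(s), {diagnosis or 'correct'}")
```

Sorting by frequency puts the most widespread misconception first, which is likely the one worth addressing with the whole class before moving on.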

If you want to begin improving your own multiple-choice items, here are the five questions you should ask yourself first:

- What specific piece of knowledge, understanding or skill do I wish to assess?

For example, we may wish to assess learners’ ability to use ‘a’ and ‘an’ correctly in English.

- What misconceptions might my learners have around this topic?

In this case, a common misconception is that ‘a’ is used before consonants and ‘an’ is used before vowels. In fact, ‘a’ should be used before consonant sounds (as in ‘a university’) and ‘an’ should be used before vowel sounds (as in ‘an hour’).

- What closed response question can I ask that assesses this topic?

So we could ask: In the sentence ‘This saddle is made for ___ horse’, should I use the indefinite article a or an?

- Does my question reveal likely misconceptions? If not, how can I change it so that these misconceptions are given as plausible options?

Our earlier question tests only one specific case. Because ‘horse’ begins with both a consonant letter and a consonant sound, a learner who holds the misconception would still answer it correctly, so the question doesn’t reveal the misconception at all. A better question would be:

Which one of the following sentences is written correctly?

- a. It took me an hour to get to work this morning.

- b. The student was wearing an uniform.

- c. This saddle is made for an horse.

- d. I was bitten by a ant.

Here, a) is the key (the correct answer), and each of the other options reveals a different misconception. For b), learners have used ‘an’ because ‘uniform’ starts with a vowel letter. For c), learners have likely used ‘an’ because they think ‘horse’ starts with a vowel sound. For d), learners have used ‘a’ because they have wrongly identified ‘ant’ as starting with a consonant sound.

- Could a learner get the correct answer if they possess one of the misconceptions?

The answer to this question should be no, which is the case for the question above. However, if we had chosen ‘This bowl contained an apple’ as the key, learners who hold the misconception that ‘an’ always goes in front of a vowel letter would have judged both a) and b) to be correct. Torn between the two, they might choose a) as a guess, so the item would have a 50% chance of not revealing that particular misconception.
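The last two questions in this list can be checked mechanically. The Python sketch below assumes a hypothetical learner who applies the letter-based rule consistently. Since spelling alone can’t tell you how a word is pronounced, it also relies on a small hand-entered table of first sounds; this table is an assumption for illustration, not a pronunciation dictionary. For each version of the item, it lists which option is actually correct and which options that learner would accept.

```python
# Hand-entered for illustration (assumption): does the noun begin with a vowel *sound*?
STARTS_WITH_VOWEL_SOUND = {
    "hour": True,      # silent 'h'
    "uniform": False,  # begins with a 'y' sound
    "horse": False,
    "ant": True,
    "apple": True,
}


def article_by_letter(noun):
    """The misconception: 'an' before any vowel letter, otherwise 'a'."""
    return "an" if noun[0].lower() in "aeiou" else "a"


def article_by_sound(noun):
    """The actual rule: 'an' before a vowel sound, otherwise 'a'."""
    return "an" if STARTS_WITH_VOWEL_SOUND[noun] else "a"


def accepted(options, rule):
    """Labels of the options whose article matches what `rule` predicts."""
    return [label for label, (article, noun) in options.items()
            if article == rule(noun)]


# The item from the text as (article used, noun); a) is the key.
item = {"a": ("an", "hour"), "b": ("an", "uniform"),
        "c": ("an", "horse"), "d": ("a", "ant")}
# The weaker variant, with 'an apple' as the key instead.
weaker_item = dict(item, a=("an", "apple"))

for name, options in [("item", item), ("weaker_item", weaker_item)]:
    print(name,
          "correct:", accepted(options, article_by_sound),
          "letter-rule learner accepts:", accepted(options, article_by_letter))
```

For the item as written, the letter-rule learner rejects the key and picks b), so the misconception is revealed. With ‘an apple’ as the key, they accept both a) and b) and can only guess.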

Glossary

- Closed response: A question format where learners choose from a set of responses (e.g. multiple choice), or where there would usually be only one acceptable response (such as a simple mathematics calculation).

- Difficulty: How hard an item is for learners; difficulty can be desirable or undesirable depending on what is being assessed.

- Distractor: An incorrect option designed to be plausible for learners who hold a particular misconception or misunderstanding.

- Formative assessment: Assessment used during learning to identify understanding and misconceptions and to adapt teaching accordingly.

- High-stakes assessment: An assessment with significant consequences (e.g., progression, certification, selection).

- Item: A single assessment question (e.g., one MCQ).

- Key: The correct answer (option) that best answers the question, reflecting the intended knowledge, skill or understanding being assessed.

- Misconception: A common incorrect idea or procedure that leads to a wrong answer; well-designed distractors can reveal specific misconceptions.

- Multiple-choice question (MCQ): A question format where learners select the correct answer from a set of options.

- Option: Any of the answer choices presented in an MCQ (normally one correct answer with the rest being incorrect answers, or distractors).

- Open response (free-text response): A question format where learners generate their own answer rather than selecting from options, and in which there is usually a range of acceptable responses.

- Stem: The prompt of an MCQ (the question or incomplete statement that the options respond to).

Develop your MCQs

Whether in the classroom or in a different assessment context, multiple-choice questions can be a powerful tool for assessment. If this is a tool you want to further develop for your own practice, we highly recommend our upcoming workshop series: Writing and evaluating effective multiple-choice questions.

References

Wiliam, D. and Leahy, S. (2015). Embedding Formative Assessment: Practical Techniques for K-12 Classrooms.